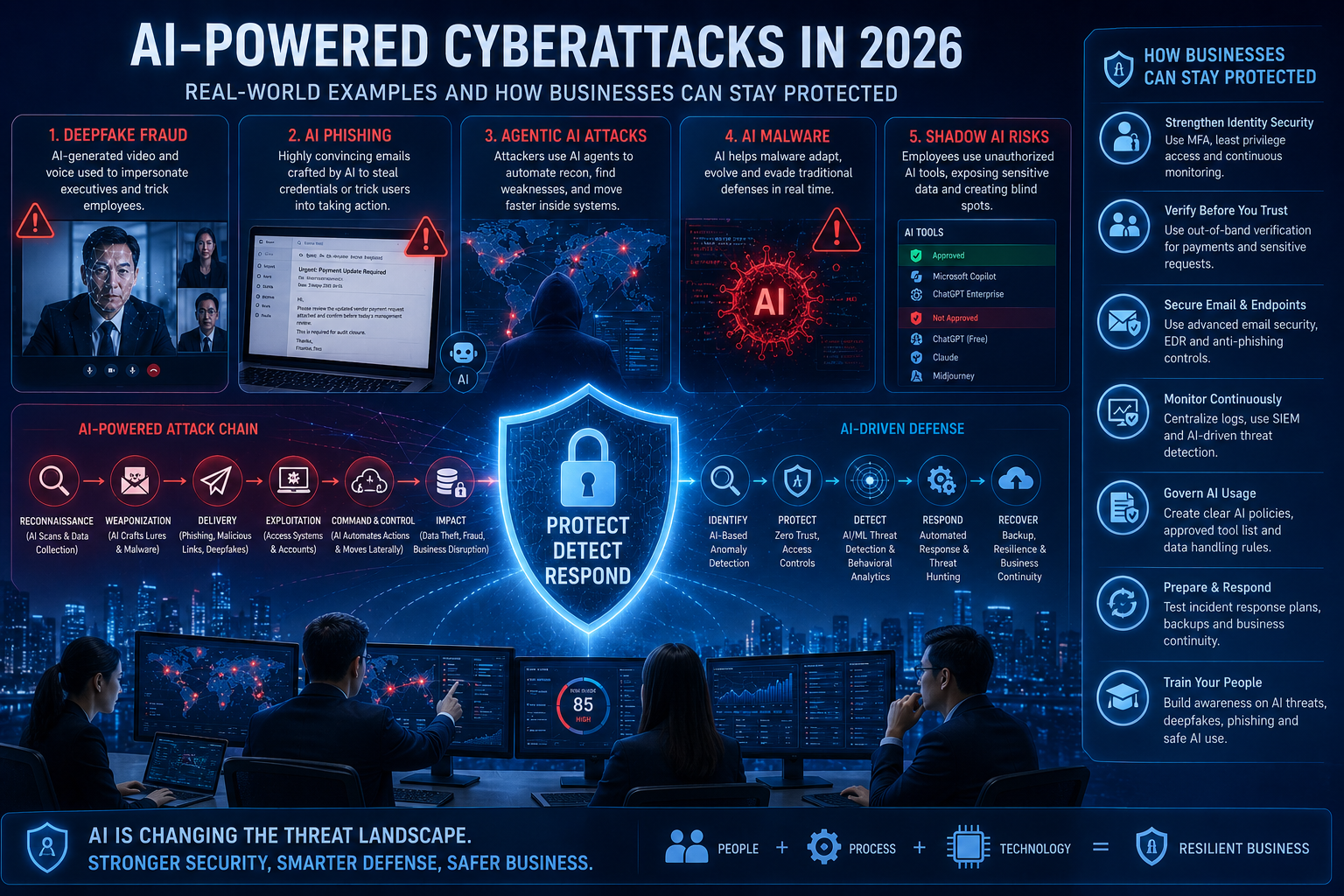

Artificial Intelligence is no longer only a tool for innovation. It is also changing how cybercriminals plan, scale, and execute attacks.

Attackers are now using AI to write convincing phishing messages, clone voices, create deepfake videos, automate reconnaissance, and speed up attacks across cloud, identity, and business systems. Microsoft’s 2025 Digital Defense Report notes that threat actors are using techniques such as AI-automated phishing and multi-stage attack chains to bypass traditional defenses.

For businesses, this means cybersecurity must evolve from basic protection to continuous detection, identity protection, AI governance, and rapid response.

1. Deepfake Fraud: When a Video Call Cannot Be Trusted

One of the most alarming real-world examples involved a finance employee in Hong Kong who was tricked into transferring around US$25 million after joining a video call where the “CFO” and other participants were deepfakes. The attackers used AI-generated video and voice to make the meeting appear legitimate.

Why this matters

Traditional approval workflows often rely on trust: a familiar voice, a known face, or an urgent message from a senior leader. AI deepfakes break that trust model.

What businesses should do

For high-risk actions like payments, payroll changes, vendor bank updates, or sensitive data transfers, companies should use:

- Out-of-band verification using a known phone number

- Dual approval for financial transactions

- Payment change confirmation with the vendor

- Executive impersonation awareness training

- Clear “stop and verify” procedures
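The controls above can be expressed as a simple release gate. The following is a minimal sketch, not a production workflow: the `Request` class, the `may_proceed` function, the action names, and the rule that two approvers other than the requester are needed are all illustrative assumptions.

```python
from dataclasses import dataclass, field

# Hypothetical labels for the high-risk actions listed above.
HIGH_RISK_ACTIONS = {"payment", "payroll_change", "vendor_bank_update", "data_transfer"}


@dataclass
class Request:
    action: str
    requester: str
    approvers: set[str] = field(default_factory=set)
    # True only after someone called a known phone number already on file,
    # not a number supplied in the request itself.
    callback_verified: bool = False


def may_proceed(req: Request) -> tuple[bool, str]:
    """Return (allowed, reason) for a request under the assumed policy."""
    if req.action not in HIGH_RISK_ACTIONS:
        return True, "not a high-risk action"
    # The requester cannot count as one of their own approvers.
    independent = req.approvers - {req.requester}
    if len(independent) < 2:
        return False, "stop and verify: needs two approvers other than the requester"
    if not req.callback_verified:
        return False, "stop and verify: needs out-of-band verification"
    return True, "all checks passed"


urgent = Request(action="payment", requester="cfo", approvers={"cfo"})
print(may_proceed(urgent))
```

The point of the design is that a convincing face or voice on a call never flips `callback_verified`; only a separate call to a number the company already trusts does.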

2. AI-Powered Phishing: No More Obvious Grammar Mistakes

Earlier phishing emails were easier to detect because they often had spelling errors, poor formatting, or strange language. Today, generative AI can create polished, personalized, and role-specific emails.

CrowdStrike's 2025 Global Threat Report found that GenAI is helping attackers improve social engineering, including phishing and business email compromise. It also recorded a 442% increase in voice phishing between the first and second half of 2024.

Example

A finance user may receive an email that looks like this:

“Hi, please review the updated vendor payment request before today’s management review. This is required for audit closure.”

The wording sounds professional, relevant, and urgent. That is exactly why it is dangerous.

Protection tips

Businesses should combine people, process, and technology:

- Enable phishing-resistant MFA where possible

- Use email security with URL rewriting and attachment scanning

- Train users on payment fraud and credential theft scenarios

- Monitor suspicious mailbox rules and forwarding

- Block login attempts from unusual locations or devices

- Use conditional access and identity risk scoring
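Monitoring mailbox rules is one item above that is easy to illustrate. The sketch below assumes mailbox rules have already been exported into simple records. The `MailboxRule` class, the `INTERNAL_DOMAINS` set, and the sample data are hypothetical and do not reflect any specific mail platform's API.

```python
from dataclasses import dataclass

# Assumption: the organization's own mail domains.
INTERNAL_DOMAINS = {"example.com"}


@dataclass
class MailboxRule:
    mailbox: str
    name: str
    forward_to: list[str]


def external_targets(rule: MailboxRule) -> list[str]:
    """Return forwarding addresses whose domain is not an internal one."""
    return [
        address
        for address in rule.forward_to
        if address.rsplit("@", 1)[-1].lower() not in INTERNAL_DOMAINS
    ]


rules = [
    MailboxRule("finance@example.com", "archive", ["records@example.com"]),
    MailboxRule("finance@example.com", ".", ["collector@mail.invalid"]),
]

for rule in rules:
    targets = external_targets(rule)
    if targets:
        print(f"Review rule {rule.name!r} on {rule.mailbox}: forwards to {targets}")
```

A rule that silently forwards finance mail outside the company is a common sign of a compromised mailbox, so even a basic check like this is worth running on a schedule.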

3. Agentic AI Attacks: Faster Reconnaissance and Attack Automation

The newest concern is not just AI writing emails. It is AI helping attackers automate parts of the attack lifecycle.

Anthropic reported a 2025 case in which attackers manipulated its AI coding tool, Claude Code, into attempting to infiltrate roughly 30 global targets, including technology, finance, chemical manufacturing, and government organizations. Anthropic estimated that AI performed 80 to 90 percent of the campaign, with humans stepping in only at a handful of decision points.

Why this is serious

AI agents can help attackers move faster by:

- Summarizing public information about a target

- Identifying exposed systems

- Helping prioritize weak points

- Generating reports for the attacker

- Supporting repeated activity at machine speed

This does not mean every attacker is fully autonomous today, but it does show that defenders need faster detection and better visibility.

Protection tips

Businesses should focus on:

- Asset inventory and attack surface management

- Vulnerability management for internet-facing systems

- Identity monitoring for abnormal access

- Endpoint detection and response

- Cloud logging and SIEM monitoring

- Incident response playbooks
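One way to spot activity at machine speed in logs is a sliding-window count per source. The sketch below is a simplified illustration: the `find_bursts` function, the event format, and the thresholds are assumptions, and a real SIEM rule would be tuned to the environment.

```python
from collections import defaultdict, deque


def find_bursts(events, window_seconds=10.0, max_events=20):
    """Return the sources that exceed max_events within any time window.

    events: iterable of (timestamp_seconds, source) pairs sorted by time.
    """
    recent = defaultdict(deque)
    flagged = set()
    for timestamp, source in events:
        window = recent[source]
        window.append(timestamp)
        # Drop events that have fallen out of the window.
        while timestamp - window[0] > window_seconds:
            window.popleft()
        if len(window) > max_events:
            flagged.add(source)
    return flagged


# Hypothetical data: one source sends 30 requests in 3 seconds,
# another sends 5 requests a minute apart.
events = sorted(
    [(i * 0.1, "scanner") for i in range(30)]
    + [(i * 60.0, "analyst") for i in range(5)]
)
print(find_bursts(events))
```

A human analyst rarely produces dozens of distinct requests in a few seconds, so sustained bursts from one identity or address are a reasonable trigger for closer review.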

4. AI Malware and Adaptive Threats

Google Cloud’s Mandiant AI Risk and Resilience report noted that threat actors moved from experimental AI use to more operationalized use in 2025. It highlighted risks such as adaptive tools, malware using LLM APIs, and AI agents navigating systems with minimal human oversight.

What this means

Traditional security tools often rely on known signatures and predictable behavior. AI-assisted malware can potentially change patterns, generate commands dynamically, or adapt to the environment.

Protection tips

To reduce risk, organizations should move beyond basic antivirus and implement:

- Endpoint detection and response

- Behavioral analytics

- Strong logging across endpoint, identity, cloud, and network

- Threat hunting

- Zero Trust access controls

- Backup and recovery testing

- Regular incident response drills
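Behavioral analytics, in its simplest form, compares current activity against a baseline instead of matching a known signature. The sketch below uses a basic z-score. The `is_anomalous` function, the threshold, and the sample counts are illustrative assumptions, and commercial tools use far richer models.

```python
from statistics import mean, pstdev


def is_anomalous(history, today, threshold=3.0):
    """Flag today's count if it sits far outside the historical baseline."""
    if len(history) < 2:
        return False  # not enough data to form a baseline
    baseline = mean(history)
    spread = pstdev(history)
    if spread == 0:
        return today != baseline
    return abs(today - baseline) / spread > threshold


# Hypothetical daily counts of commands run by one service account.
history = [12, 15, 11, 14, 13, 12, 16]
print(is_anomalous(history, 14))
print(is_anomalous(history, 240))
```

Because the check looks at how much behavior deviates rather than what the activity looks like, it can still fire when a tool changes its commands or patterns between runs.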

5. Shadow AI: The Internal Risk Many Companies Miss

AI risk is not only about hackers. Many companies now face “Shadow AI”: employees using AI tools without approval, visibility, or data protection controls.

Mandiant highlighted that AI adoption can outpace security controls, especially when organizations lack visibility into AI assets and governance.

Common risks

- Sensitive company data pasted into public AI tools

- Customer data used in unapproved platforms

- AI-generated code added without security review

- Lack of audit trail

- No approval process for AI tools

- No policy on what data can be used with AI

Protection tips

Every organization should create an AI usage policy covering:

- Approved AI tools

- Restricted data types

- Human review requirements

- Secure coding expectations

- Vendor risk review

- Logging and monitoring

- Incident reporting process
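The "restricted data types" part of such a policy can be backed by a simple check before text is sent to an external AI tool. The sketch below is an assumption-heavy illustration: the pattern names, the regular expressions, and the `restricted_types_in` function are hypothetical, and simple patterns like these will miss many cases that a dedicated data loss prevention product would catch.

```python
import re

# Hypothetical restricted data types and rough patterns for each.
RESTRICTED_PATTERNS = {
    "email_address": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "card_number": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
    "private_key": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}


def restricted_types_in(text: str) -> list[str]:
    """Return the names of restricted data types detected in the text."""
    return sorted(
        name for name, pattern in RESTRICTED_PATTERNS.items() if pattern.search(text)
    )


prompt = "Summarize the complaint from jane.doe@example.com about her invoice."
found = restricted_types_in(prompt)
if found:
    print(f"Blocked: prompt contains {found}")
else:
    print("Prompt allowed")
```

A check like this works best as a prompt to the user to stop and reconsider, paired with logging, and not as the only safeguard.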

Practical AI Cybersecurity Checklist for Businesses

Use this quick checklist to improve readiness:

✅ Identify all AI tools used by employees

✅ Create an AI usage and data handling policy

✅ Enable phishing-resistant MFA for critical users

✅ Monitor suspicious login behavior

✅ Review vendor payment approval workflows

✅ Train employees on deepfake, voice phishing, and AI phishing

✅ Secure cloud and SaaS applications

✅ Maintain logs for identity, endpoint, cloud, and email

✅ Run tabletop exercises for AI-enabled fraud scenarios

✅ Test backup and recovery procedures

✅ Conduct regular vulnerability assessments

✅ Use SOC monitoring for early threat detection

How KIS Can Help

KIS helps businesses protect, detect, and respond to modern cyber threats through practical cybersecurity services such as:

- Cloud & AI Security Review

- Threat Detection & Prevention

- Vulnerability Assessment & Penetration Testing

- Security Monitoring and Incident Response

- Cybersecurity Awareness Training

- Compliance and Audit Readiness

AI-powered threats are moving fast. The best defense is not one tool — it is a layered security program combining people, process, technology, and continuous monitoring.